Cheryl Tan

On the 21st of October 2019, Google’s search engine began rolling out its latest update for English-language queries in the US: the Bidirectional Encoder Representations from Transformers (BERT) algorithm. It affects approximately 1 in 10 queries, particularly informational ones. If it hasn’t affected your site yet, rest assured it eventually will as your traffic grows.

So, what is BERT, how does it work, and what can you do about it? Here’s everything you need to know about BERT, and how you can improve your site’s content to accommodate one of the biggest Google algorithm updates to date!

Unlike previous algorithms, BERT was built to improve Natural Language Processing (NLP), the branch of Artificial Intelligence (AI) concerned with how computers understand and communicate in human language. BERT was the first deeply bidirectional contextual language model, which is why it is widely regarded as one of the most significant breakthroughs in NLP so far.

In short, BERT improves Search by helping Google understand the meaning and intent behind a query, so it can better match questions with relevant answers.

Words are often ambiguous and polysemous (i.e. they have many meanings). When spoken, a different tone or inflection can also change the meaning. In addition, people tend to type queries the way they think of them, in incomplete sentences without proper punctuation. BERT helps Google interpret such queries in context and better understand conversational English.

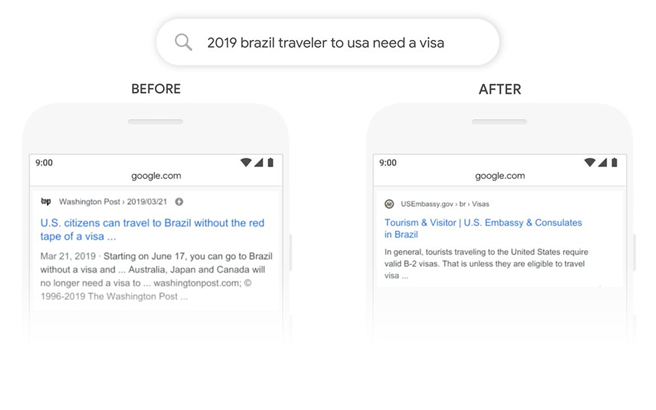

Let’s look at an example given by Google. In the search “2019 brazil traveler to usa need a visa”, the word “to” is critical to understanding the question, because it is about someone travelling from Brazil to the US rather than the other way around. With BERT, Google understands the intent behind this query, and the results it returns are much more relevant than before.

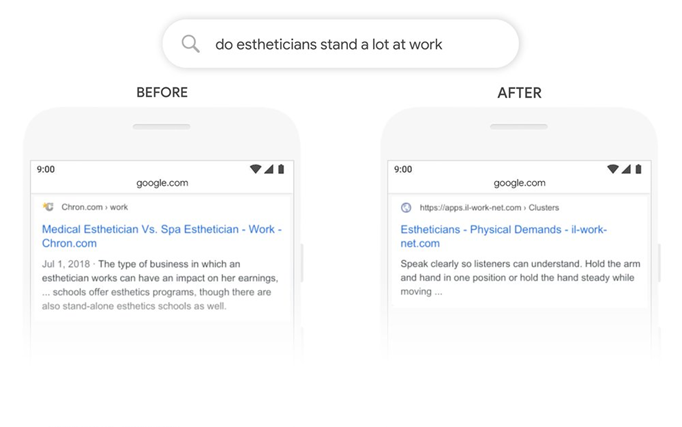

Another example is the search “do estheticians stand a lot at work”, where the question is about the physical demands of the job. Previously, the engine matched “stand” in the query to the term “stand-alone” in a result, which is not how the word is used in the context in which it was asked. With BERT, the meaning behind the query is understood, and much more useful results are displayed instead.

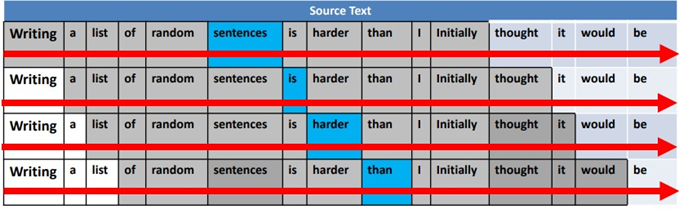

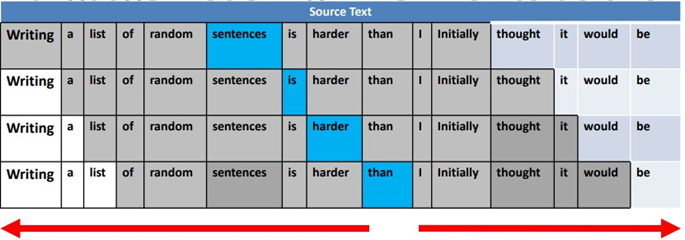

Previously, language models typically read text in only one direction, either left to right or right to left, rather than considering both directions at the same time.

As the first deeply bidirectional contextual language model, BERT processes each word in relation to all the other words in a sentence, both before and after it, instead of reading one word at a time in order. This gives it much better context for a query.
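To make the difference concrete, here is a toy Python sketch that only shows which neighbouring words are visible when interpreting the word “to” in Google’s Brazil example. It is not BERT and not Google’s code: real BERT uses Transformer self-attention over learned word representations. The function names `left_to_right_context` and `bidirectional_context` are hypothetical, chosen for illustration.

```python
# Toy illustration only (not BERT itself): which neighbouring words a model
# can "see" when it interprets a single word of a query.

def left_to_right_context(tokens: list[str], i: int) -> list[str]:
    """A one-directional (left-to-right) model only sees the words before position i."""
    return tokens[:i]


def bidirectional_context(tokens: list[str], i: int) -> list[str]:
    """A bidirectional model sees the words on both sides of position i at once."""
    return tokens[:i] + tokens[i + 1:]


query = "2019 brazil traveler to usa need a visa".split()
i = query.index("to")

print(left_to_right_context(query, i))
# ['2019', 'brazil', 'traveler']
print(bidirectional_context(query, i))
# ['2019', 'brazil', 'traveler', 'usa', 'need', 'a', 'visa']
```

Reading only left to right, the words “usa” and “visa” are not yet available when “to” is interpreted, so the direction of travel is unclear. With both sides visible, the destination becomes part of the context.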

Because language isn’t a structured set of data, it is very difficult for traditional computer programs to understand. With BERT adding context to ambiguous questions, Google changes the way it interprets queries, and therefore the results it returns.

Users often ask questions online in vague ways without any context, and spoken words can have different meanings depending on inflection. Because BERT understands the nuances of language better, it could make a big difference to how Google interprets spoken queries.

Is your site optimised for voice search? If not, check out our blog post, 5 Ways To Optimise For Voice Search.

Google can also take what this system learns from English (the language in which the vast majority of web content exists) and apply it to other languages in the future, so Search can return more relevant results in those languages too.

Although Google says optimising for BERT is “impossible”, here are several things that you can do to stay on top of the update:

Understand how people are searching, what they’re searching for, and what search results they want to see. You can use tools such as Google Autocomplete and Answer the Public for research, and then write content that caters to what your audience wants to know.

Although BERT is good at analysing the context of words within sentences, it is still just a program and can only understand so much. Avoid flowery language and write naturally and clearly, so your content is easy to read and easier for search engines to match to the right queries.

Keyword density might not be as important anymore, given BERT’s better understanding of context. If you over-optimise, BERT might recognise that your content is not as relevant as its keywords suggest. However, that doesn’t mean you should disregard keywords entirely. Use tools like Google Keyword Planner or Google Trends to identify which keywords you should be using, and include only the most relevant ones in your content.
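If you want a quick check for over-optimisation, keyword density is usually calculated as the number of times a keyword appears divided by the total number of words, multiplied by 100. The Python sketch below assumes that simple definition and a basic word-splitting rule. Other SEO tools may define it differently, for example by weighting multi-word phrases by their length. The `keyword_density` function is hypothetical and is not part of Google Keyword Planner or any Google tool.

```python
import re


def keyword_density(text: str, keyword: str) -> float:
    """Return occurrences of `keyword` per 100 words of `text` (assumed definition).

    Words are lowercased runs of letters, digits and apostrophes; a multi-word
    keyword counts as one occurrence wherever the whole phrase appears in order.
    """
    words = re.findall(r"[a-z0-9']+", text.lower())
    phrase = keyword.lower().split()
    n = len(phrase)
    if not words or n == 0:
        return 0.0
    # Slide a window of the phrase's length across the text and count exact matches.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == phrase)
    return hits * 100 / len(words)


sample = "Dog grooming tips: how to groom a dog at home. Grooming a dog is easy."
print(keyword_density(sample, "dog"))  # 20.0 (3 of 15 words)
```

A high figure is only a prompt to reread the copy for natural phrasing, not a ranking target.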

As New Zealand’s most awarded SEO agency, we know what it takes to optimise your site and make sure your business ranks well for your keywords. Confused about BERT? We can help you with your content marketing. Contact Pure SEO today to learn more.